Sketches

Design Decisions

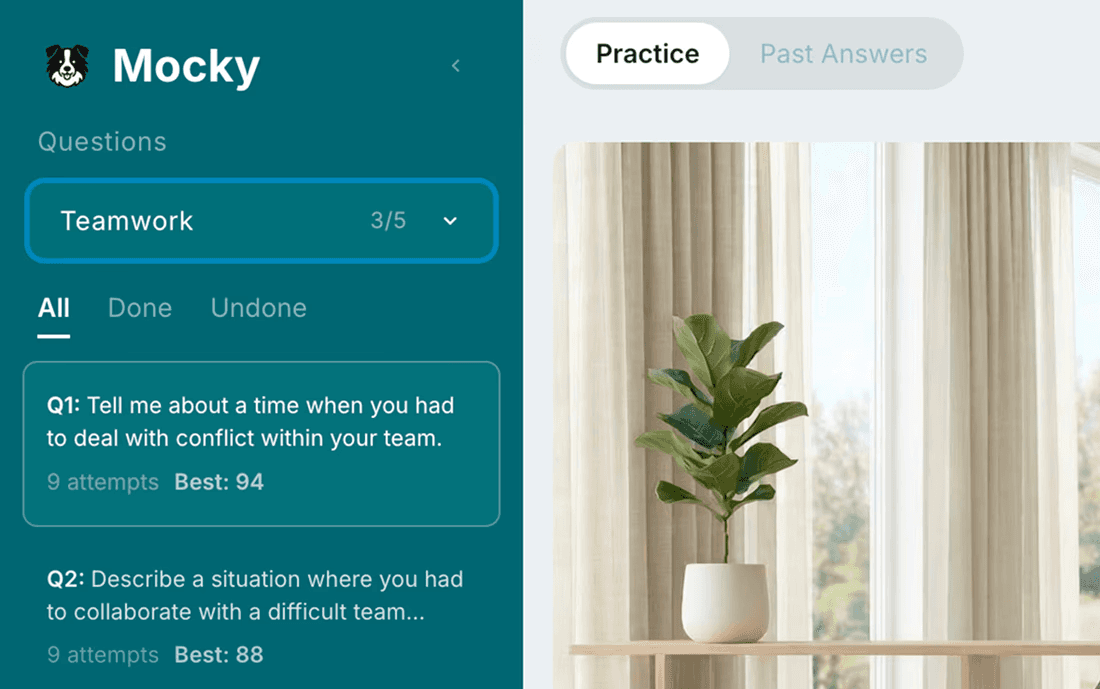

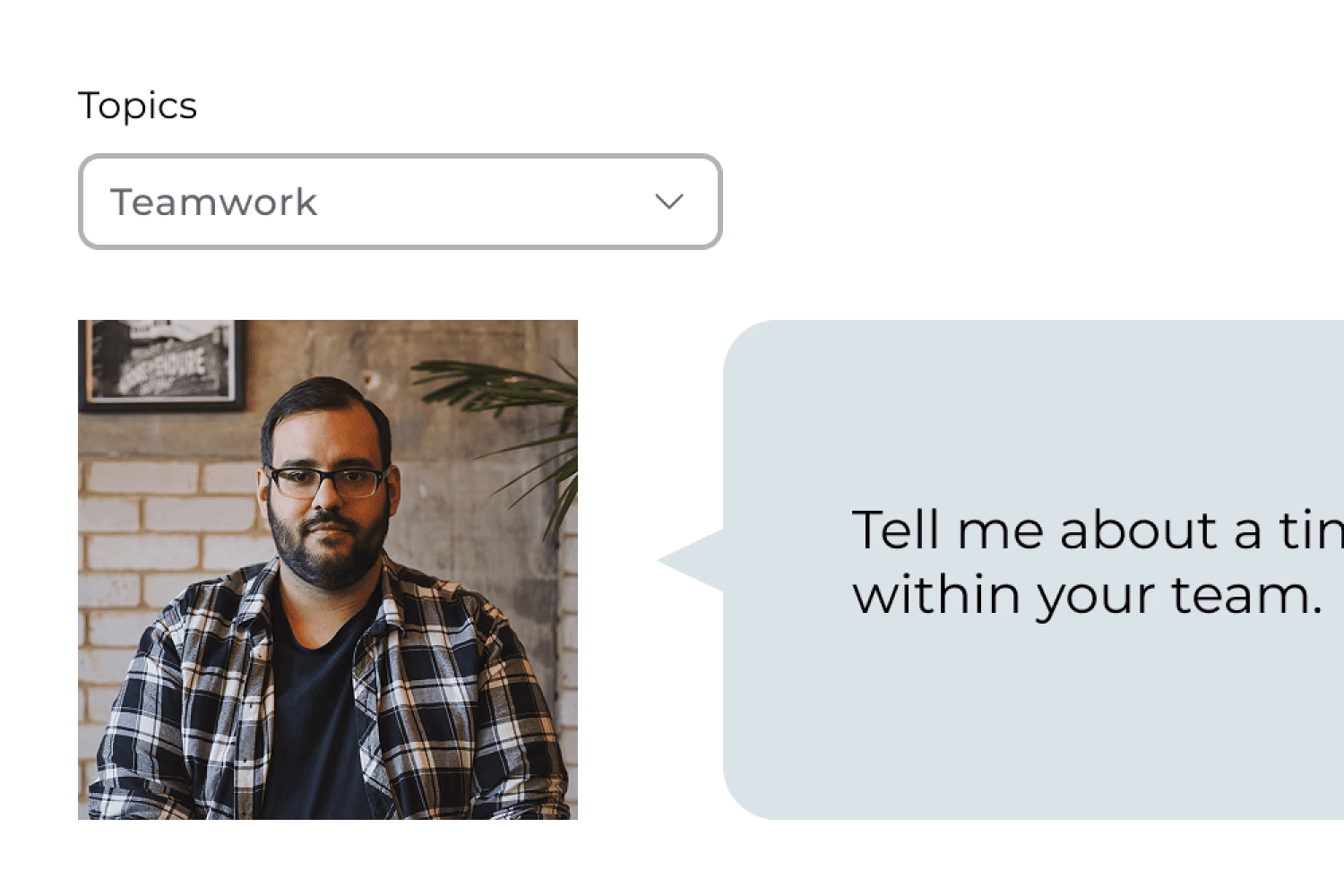

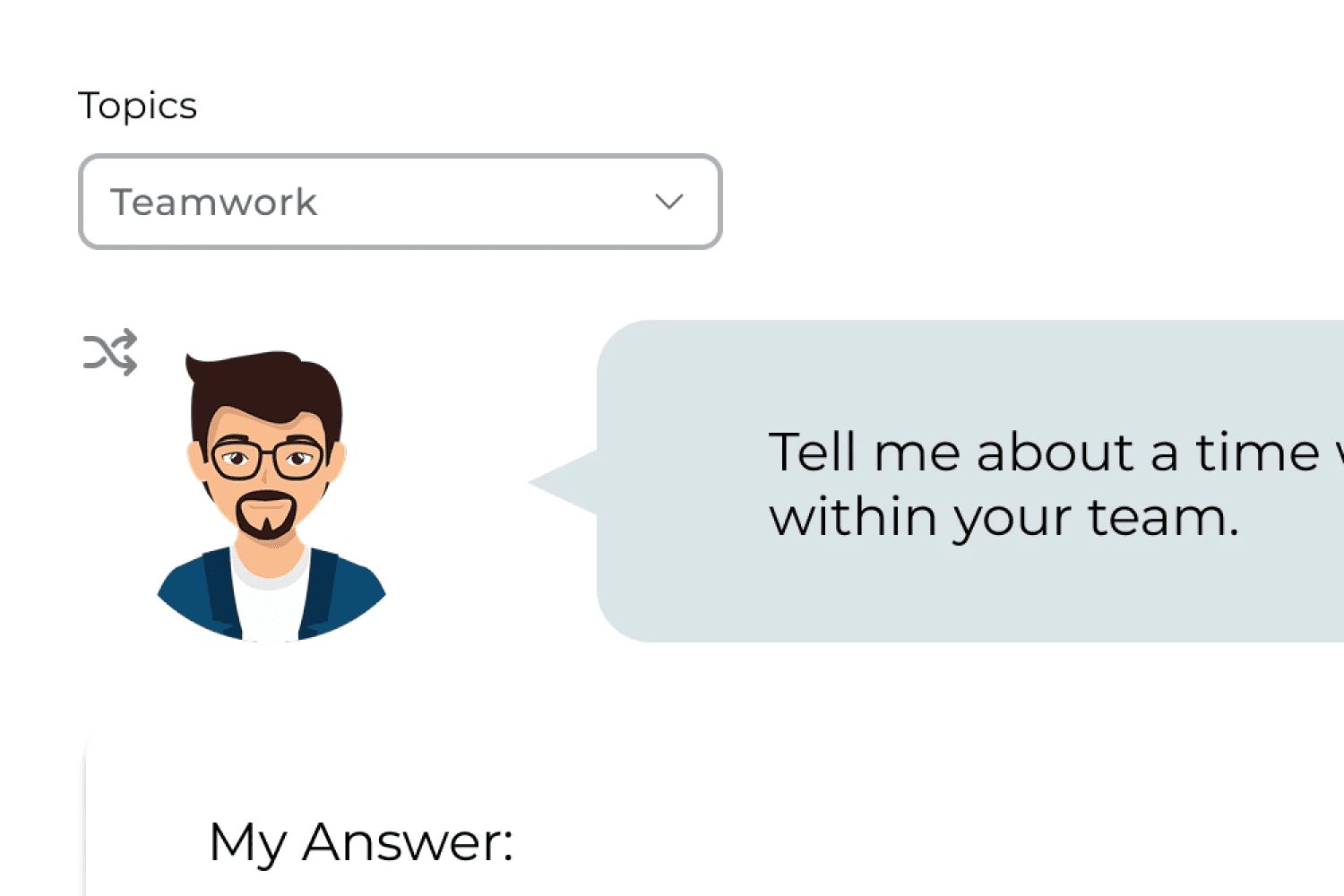

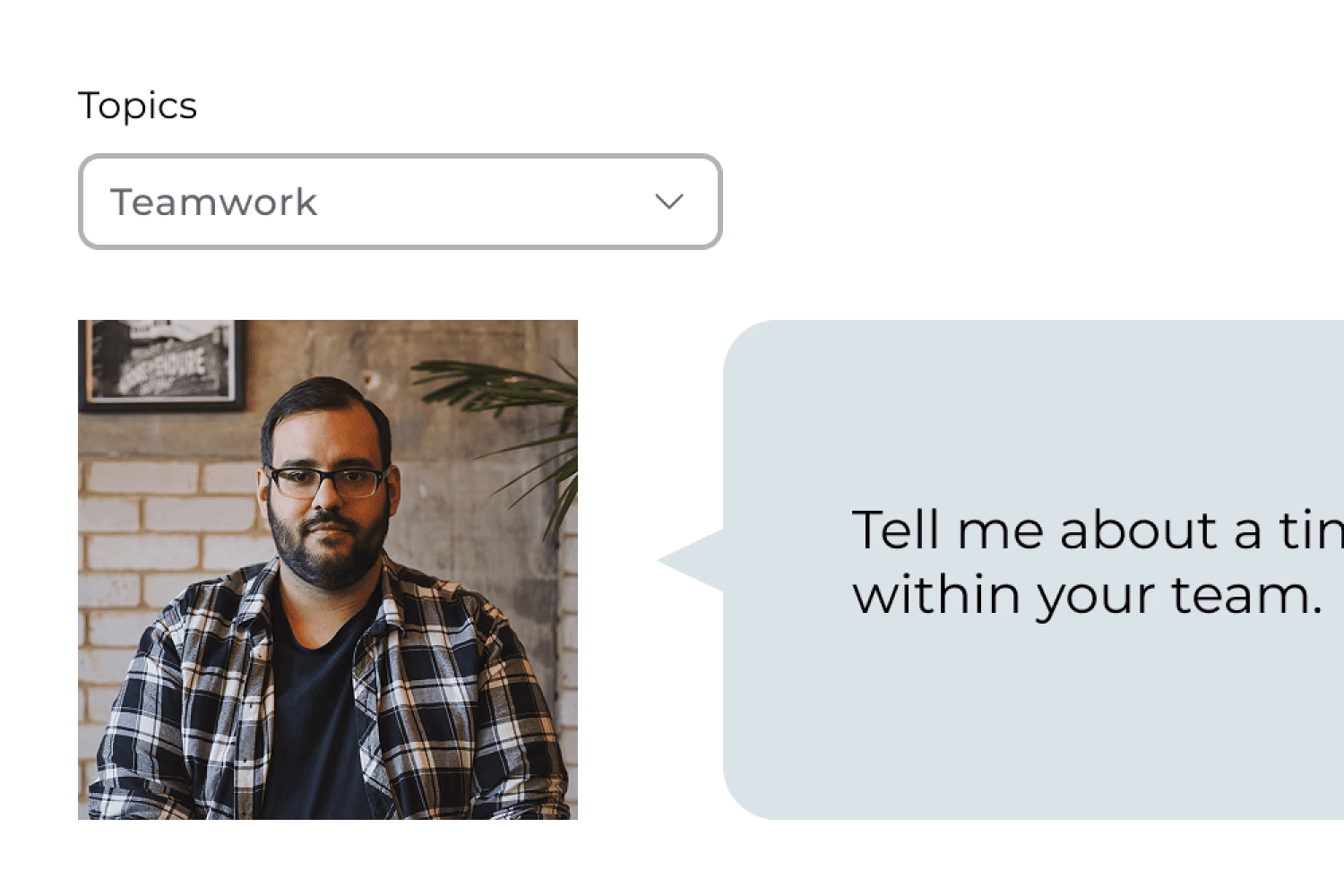

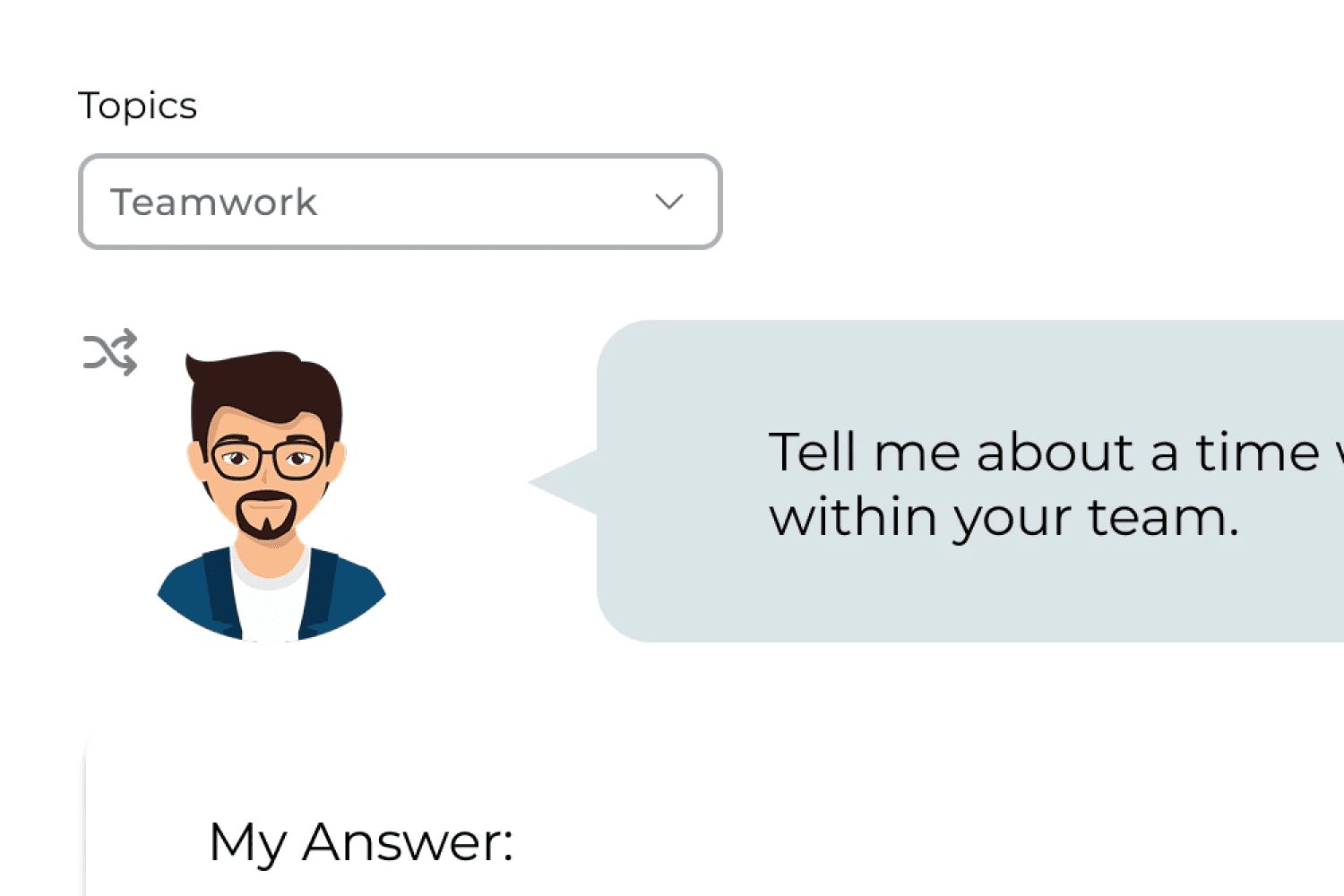

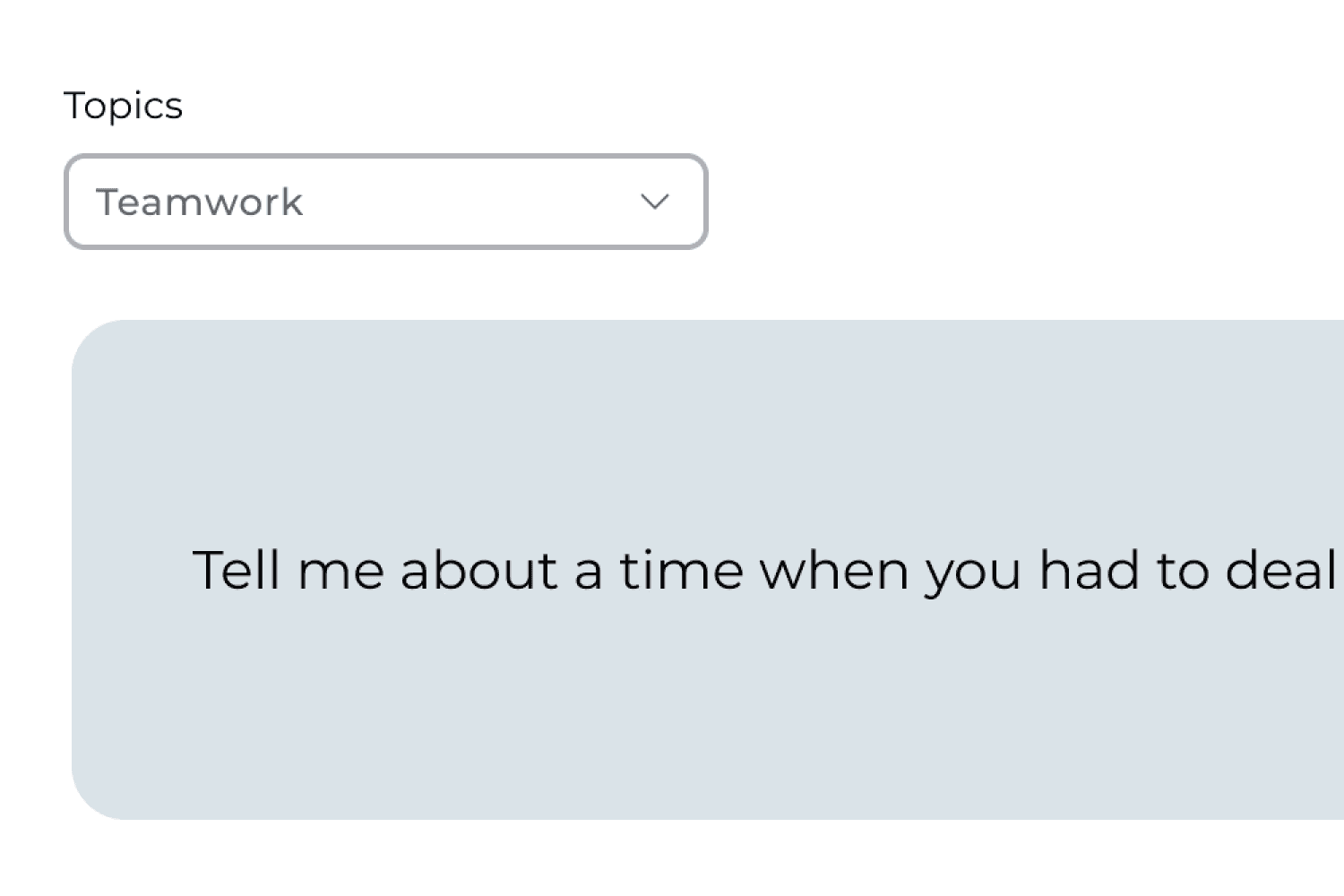

A/B Testing #1

What type of interviewer presence works best?

🌟 Users preferred realistic human avatars, as they created a more authentic interview experience.

Realistic human avatar

Illustrated avatar

No avatar

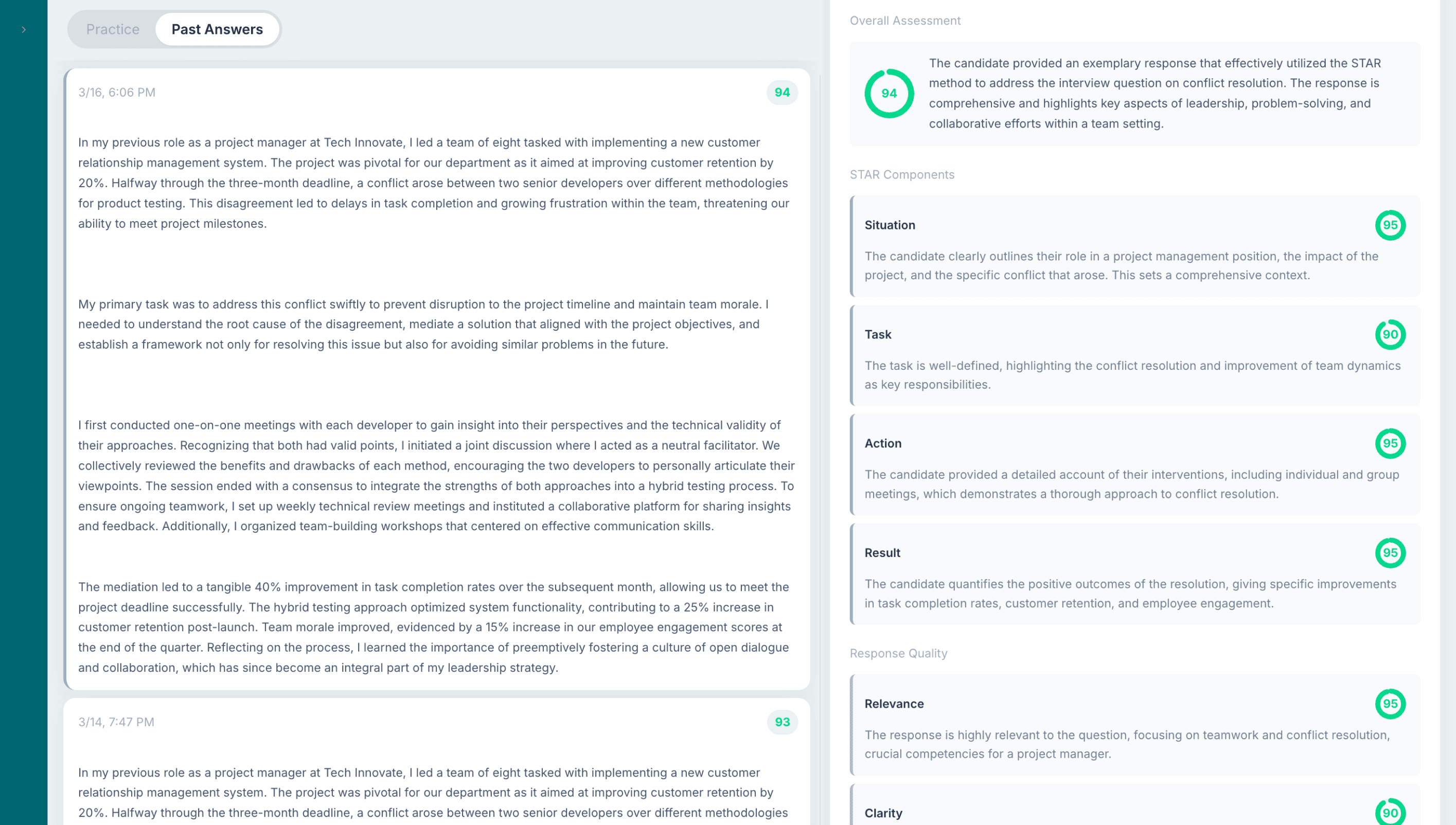

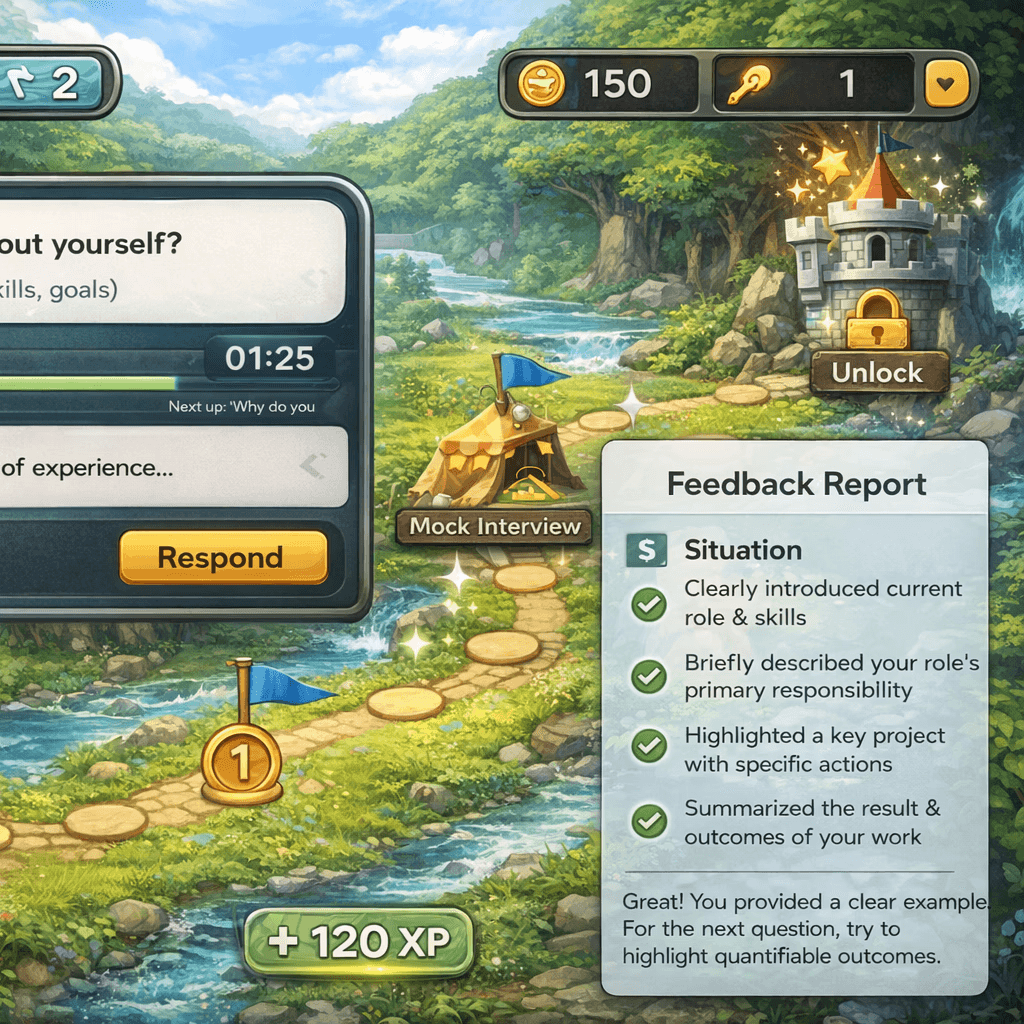

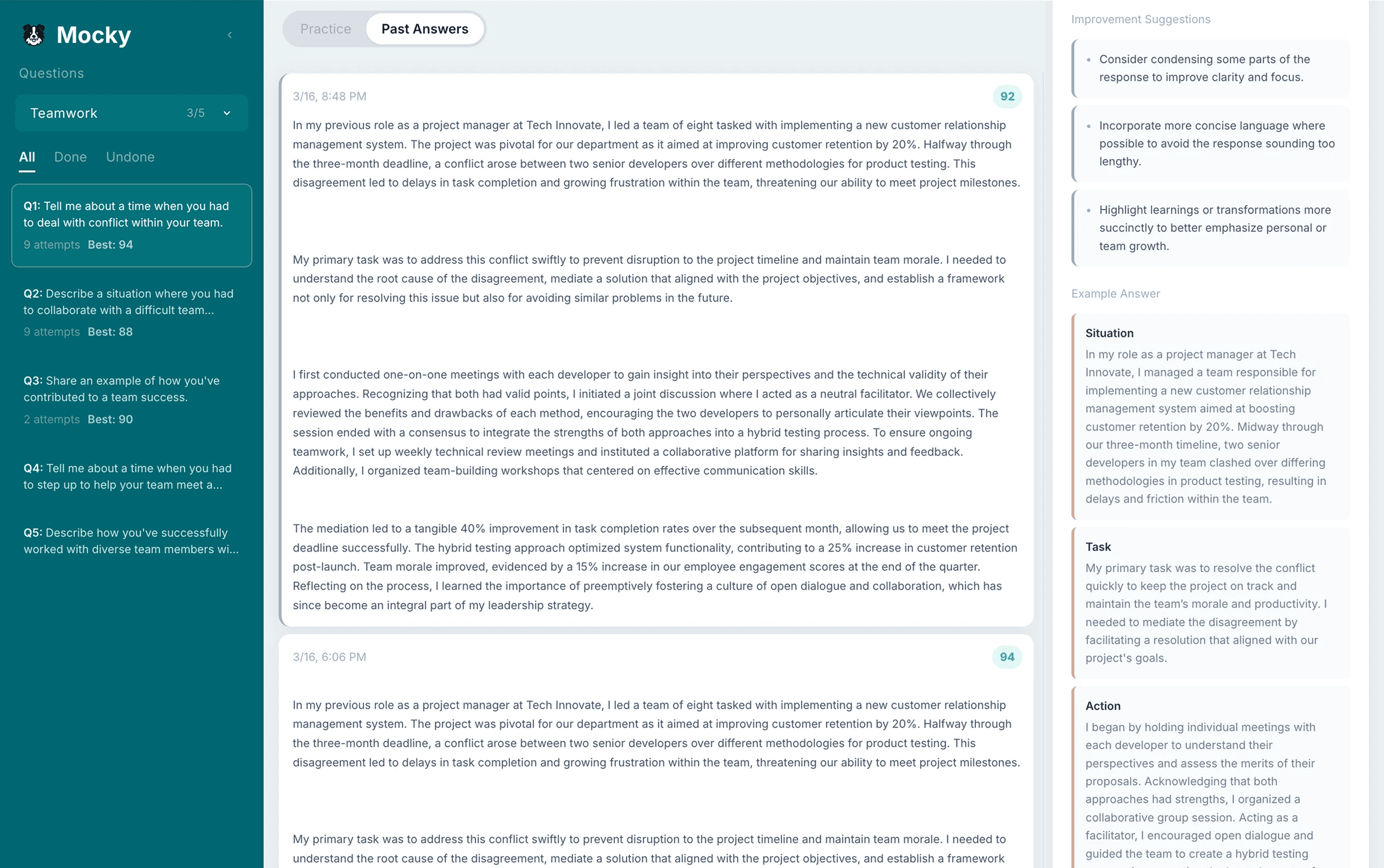

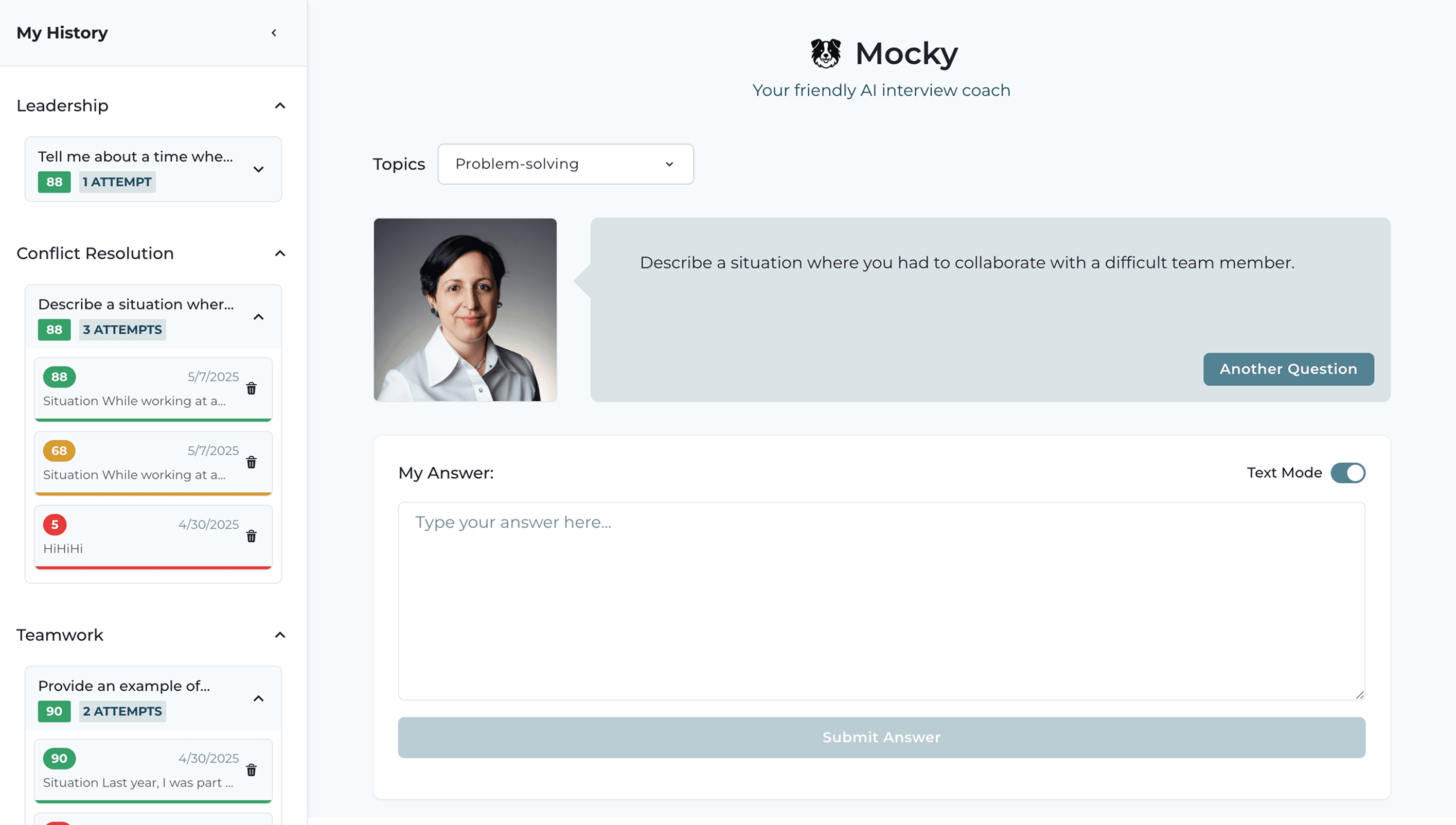

A/B Testing #2

How should answer history be organized?

🌟 Users preferred grouping answers by topic, as it made it easier to review and improve specific skills.

Realistic human avatar

Illustrated avatar

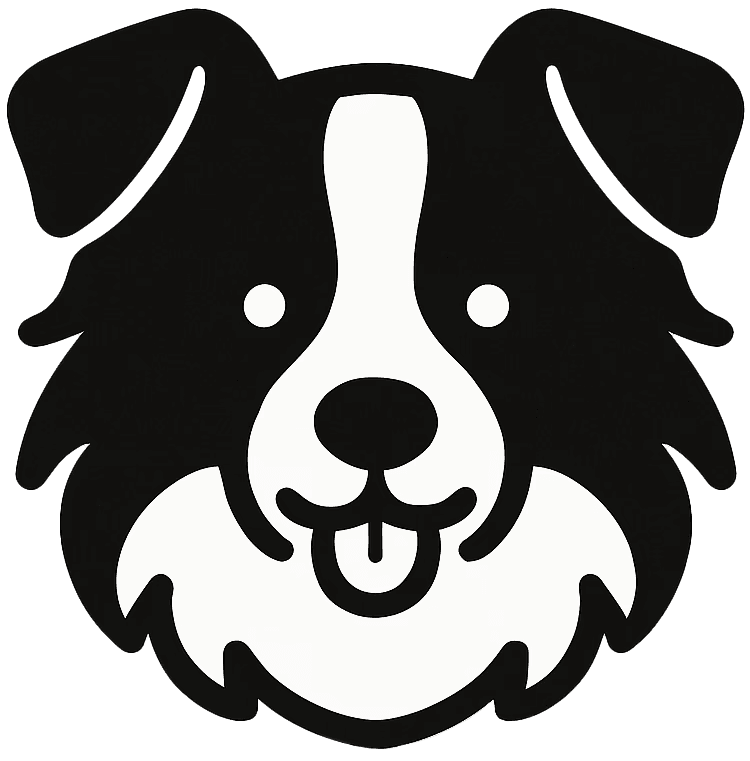

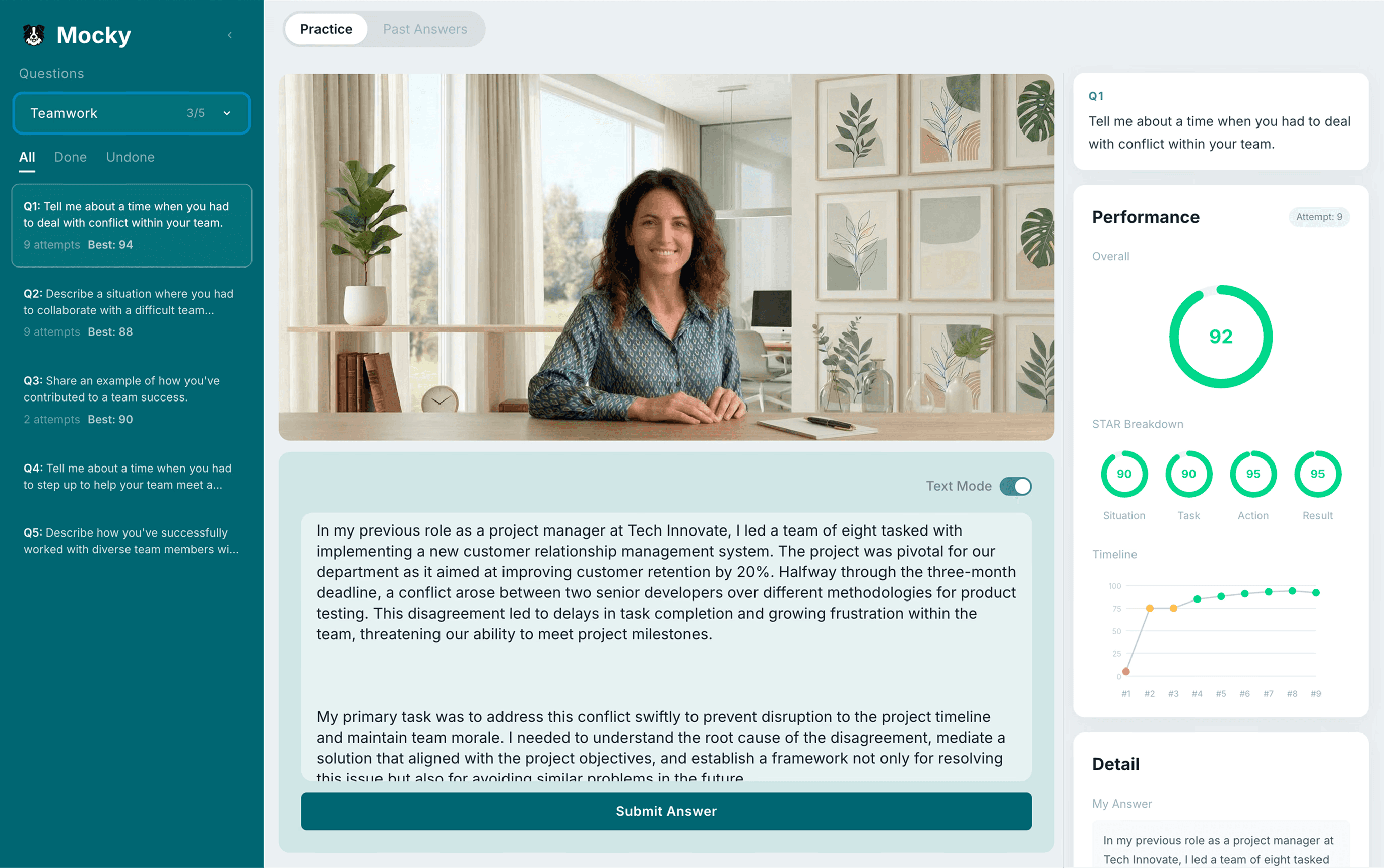

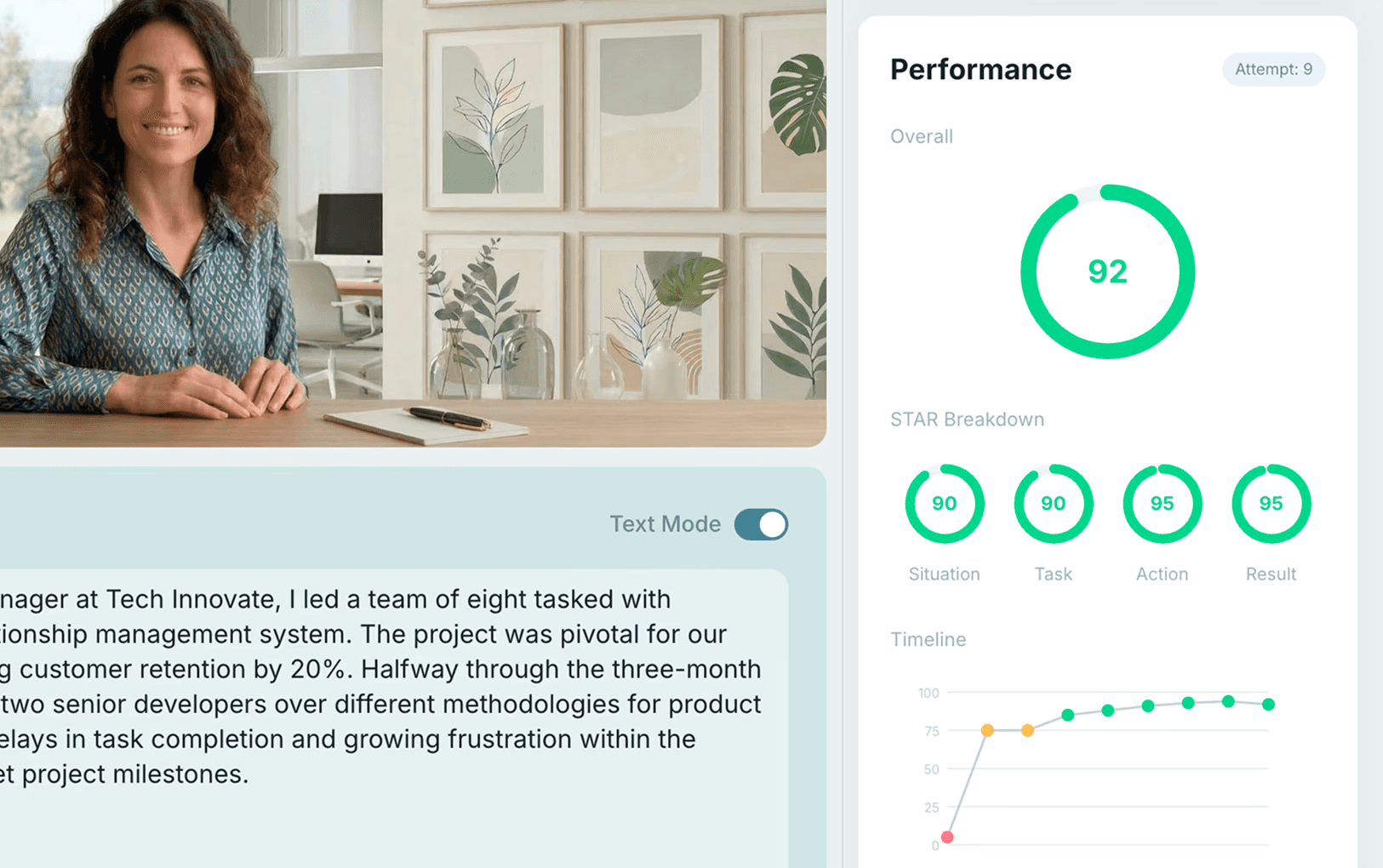

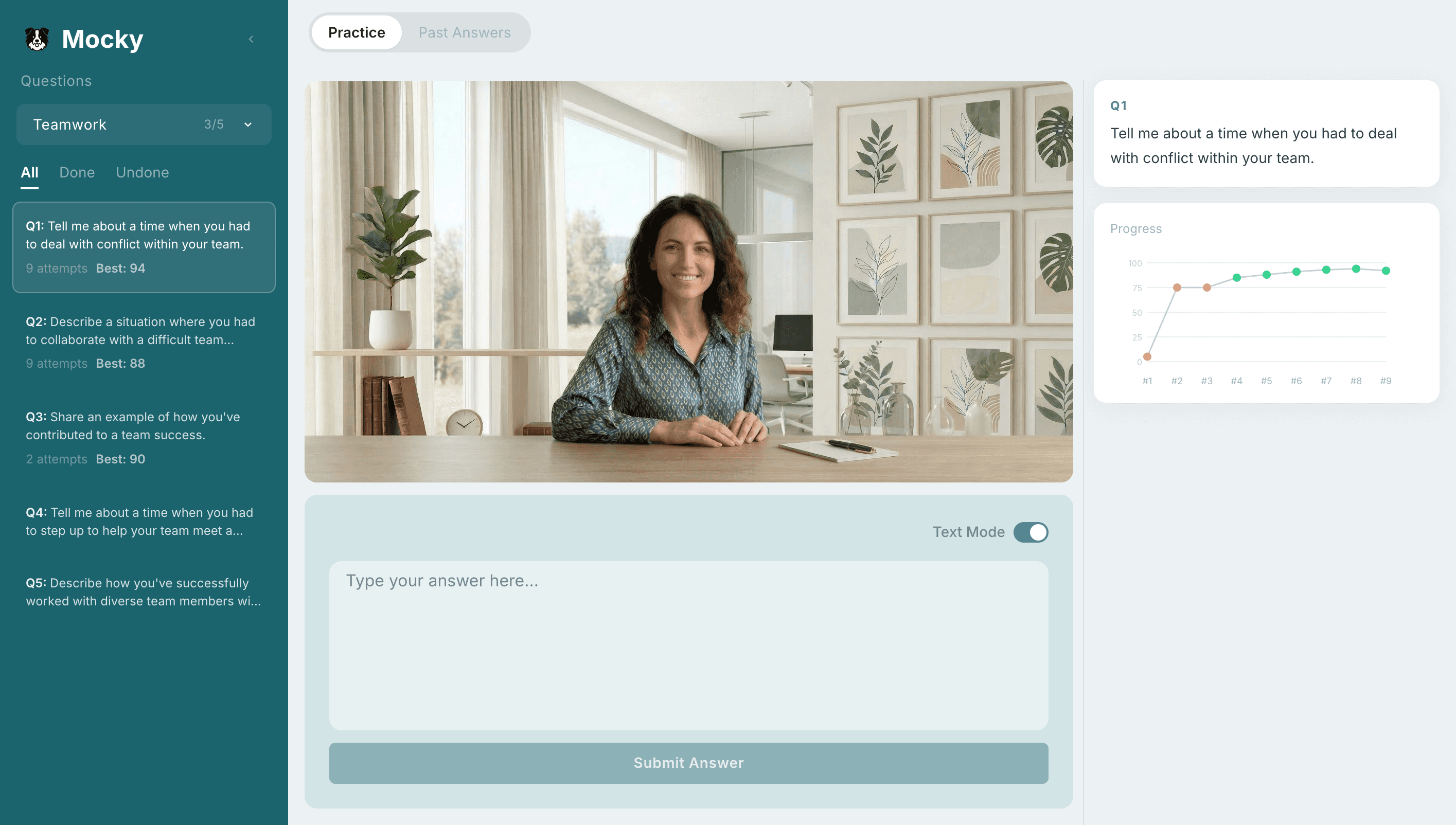

Design Iterations

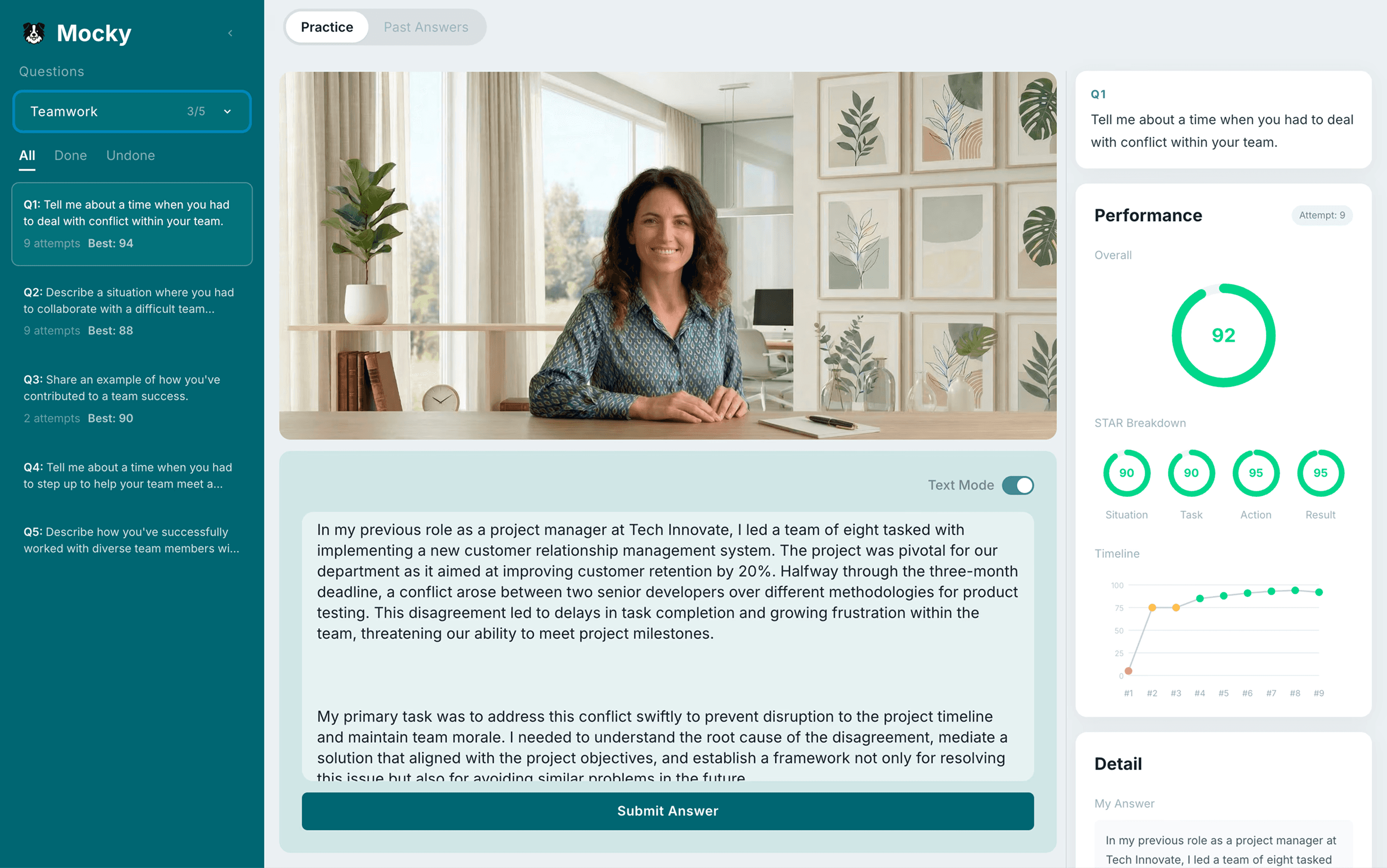

User Feedback

"I want to see all questions at once, not one at a time"

💡 Replaced random AI questions with a structured question list for flexible navigation

"It feels more like texting than a real interview"

💡 Replaced avatar-based interaction with a more realistic, interview-like presence

"It’s hard to track how much I’ve improved"

💡 Added per-question progress visualization to make improvement visible

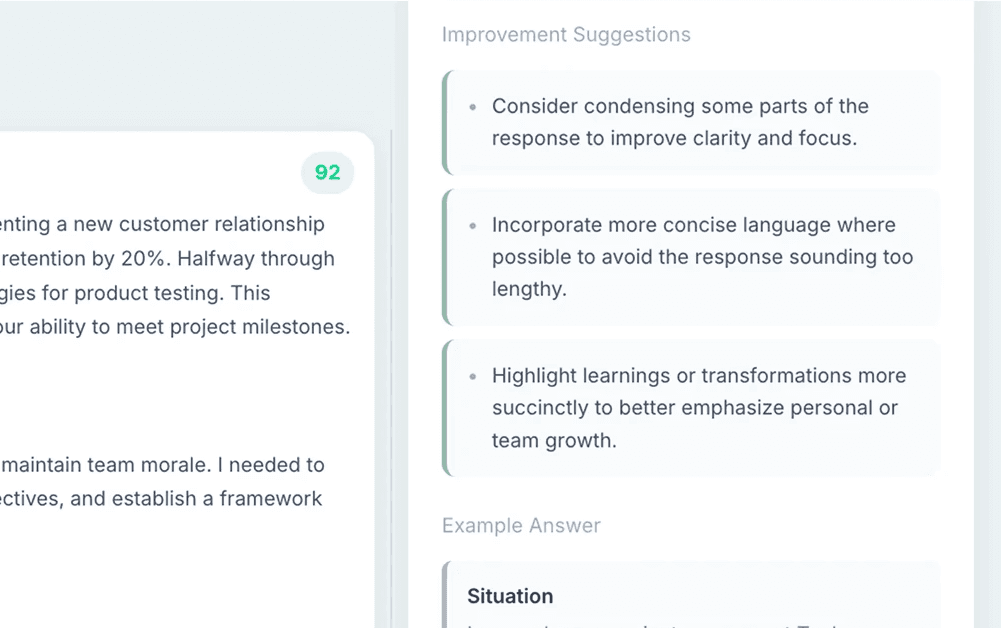

User Feedback

"I want to compare my answer with the feedback"

💡 Replaced vertical scrolling with a resizable side-by-side layout for easy comparison